The dramatic transformation of service delivery to the cloud touches business users and consumers alike. Business services in the cloud have become virtualised and elastic. Consumer services, increasingly dominated by on-demand streaming, are now hosted in the cloud. The growth of mobile broadband and the development of the Internet of Things continue the transformation. But one thing remains the same: an ever-increasing expectation of quality.

At the heart of the cloud are data centres, where storage and compute resources host services and applications, and the networks that interconnect them with other data centres and end users. The need for ever more bandwidth is driving how transport networks between data centres are built. At the same time, the dynamic nature of service support in the cloud is driving the need for a programmable, more flexible transport network that matches the inherent flexibility of the storage and virtual compute resources in the data centres. This article explores cloud transport network requirements, especially the use of reconfigurable optical add-drop multiplexers (ROADMs) in data centre transport networks, and their role in enabling flexible, dynamic networking for cloud network deployments.

Cloud transport network needs

The requirements for cloud transport networking mirror what’s needed in cloud data centres as they evolve in terms of scale, flexibility, capability, and applications. The five key transport networking requirements are:

- High bandwidth – the need for high-bandwidth transport between data centres and end users continues to grow, driven by applications and the growing number of end users. Demand will keep rising as more machines connect to cloud applications. Depending on the application, there are also additional requirements for low latency and low jitter. Dense wavelength division multiplexing (DWDM) transport systems are usually deployed for connecting data centres. DWDM and a variety of other networking solutions connect end users to cloud data centres.

- Power efficiency – optical transport systems must efficiently transport large amounts of bandwidth with minimum power consumption. Minimising the number of systems, reducing the number of interfaces, employing low-power digital signal processors, and keeping transport signals optical to avoid electrical regeneration all contribute to reduced power consumption.

- Security – the importance of a secure network has grown as services are now distributed across geographically diverse data centres. Because these transport networks carry signals optically over fibre, there is a built-in measure of security in terms of how difficult it is to snoop on or decode information. Additional security measures are required for data plane transmission, the network control and management planes, and system access.

- Resiliency – transport network resiliency that enables application and connection continuity is very important, especially considering the large amount of traffic and services supported by optical transport networks connecting data centres and end users. Geographic path diversity and more mesh interconnections improve the ability of transport networks to recover quickly from path problems. It is important that resiliency is implemented in a way that does not increase power consumption by adding systems or interfaces, but instead uses existing interfaces as much as possible for protection, restoration and disaster recovery. Optical switching is a low-power approach to this requirement.

- Programmability – in addition to dramatically increasing bandwidth requirements, services are becoming more dynamic, generating the need for a greater variety of connectivity options and more flexible and responsive network behaviour. This area is poised to undergo further transformation from today’s mostly rigid networking. Programmable networking can provide the flexibility and scalability necessary to support diverse and customised offerings while adapting dynamically as conditions and requirements change. Use cases include bandwidth on demand, data centre backup, maintenance, and more.

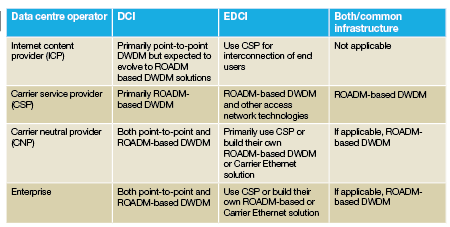

The nature of the data centre operator also influences cloud transport network requirements and the methods deployed for interconnecting data centres and end users. There are essentially four segments of data centre operators, which vary in terms of business models, services provided, and transport network requirements:

- Internet content providers (ICP) – are focused on the creation, storage and delivery of content. ICPs may build their own inter-data-centre transport network, or lease fibre or connections from carrier service providers (CSP). Delivery of an ICP service to the end customer usually takes place over a network provided by a CSP. Services and applications provided by ICPs are often referred to as over-the-top (OTT) services. Examples include Google, Amazon, and Facebook.

- Carrier service providers (CSP) – in addition to data centre services, CSPs provide a broad range of communications and networking services. They own fibre infrastructure and build transport networks for both their data centres and for other data centre operators. CSPs are also the main provider of connections from end users to all data centre service providers. Examples include NTT, Verizon, AT&T, and Comcast.

- Carrier neutral providers (CNP) – provide data centre infrastructure, power, colocation and interconnection for other ICPs, CSPs and enterprises. Examples include Equinix, 365 Data Centers, DuPont Fabros, and EdgeConneX.

- Enterprise verticals (EV) – are enterprises that build their own private data centre networks or use another entity like a CNP or CSP to host their applications and/or provide the networking. Examples include financial institutions and hospital networks.

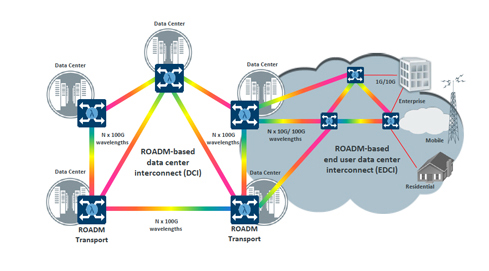

No matter the type of operator, there are two kinds of transport interconnections required for cloud services – those between data centres and those connecting the end customer to the data centre. Interconnection into the cloud of data centres must also connect to a specific data centre, but applications or services may move within the cloud. At the same time, the end user’s entrance point may also move. An adaptable end user access transport network or flexibility within the data centre to data centre transport network is required to support all these connections. We will come back to this more advanced requirement later, but for now delve into some basic requirements for both types of transport connections.

The other data centre interconnect

What primarily defines data centre interconnect (DCI) is the requirement for very-high bandwidth connections between the data centres. (Since there is some potential for ambiguity on whether DCI also refers to the connections between end users and the data centre, for the purpose of this article, DCI is only used to refer to data centre to data centre connections.) Depending on the data centre operator, the scale of the cloud-based services provided, and the number of data centres, the bandwidth required between data centres can range from multiple 10G to multiple 100G connections. In the case of hyperscale data centres, the DCI bandwidth has already transitioned to multiple 100G connections and will continue to increase, both in number, and in rate, to 400G and beyond in the future.

The number of data centres that need to be interconnected is another important factor that drives the type of transport network. For ICPs, the number of interconnected data centres can be small. A good example is Google. As of July 2015, the firm has 12 large global data centres, with an additional two announced but not yet active, that support search, Gmail and YouTube cloud applications, according to the company’s website. Contrast this with Verizon, a CSP that currently has 43 global data centres providing ‘hosting, colocation, cloud, and IT infrastructure solutions’, as per the company’s website.

Given all of the requirements discussed above, fibre-based DWDM solutions are the primary transport technology used to implement DCI transport networks. Many of these networks are built today using point-to-point DWDM connections between the data centres, especially for long-haul interconnections. In the case of metro DCI where there are more interconnected data centres that support connections to content, internet peering and also connect to end-user networks, ROADM deployments have gained traction.

In the future, bandwidth requirements will continue to grow and more focus will be placed on building greater resiliency into cloud services and DCI transport networks. This resiliency, both in the data centre service functions and in the DCI network, must protect not only against the failure of individual fibre connections, but also against catastrophic natural or man-made events that may have a more far-reaching impact on the ability to maintain service.

To improve the service experience, especially for services that require high bandwidth, operators are evaluating the distribution of data centres closer to the end user. The aim is to improve factors like latency, but also to reduce the overall transport bandwidth required in the aggregation part of the network. This would have far-reaching effects on the DCI network. It would strain networks built from point-to-point DWDM links, which will not offer the flexibility, manageability, low power consumption, and required cost profile as the number of meshed data centres increases.

Connecting end users with EDCI

While less high profile than the interconnections between data centres, end user to data centre interconnect (EDCI) is just as important since, without the right connection, users cannot exploit the benefits of cloud services to their fullest extent. One of the key differences in connecting enterprise and consumer end users to the cloud is that in most cloud-based applications there are many more user connections to data centres than connections between data centres. These connections are also much more diverse, not only in number, but also in speed and access type – whether enterprise location connections, mobile network connections, or enterprises and consumers connected through Internet service providers. In most cases, the EDCI connection bandwidth requirements are lower than DCI bandwidth requirements. Specific data centre applications also place requirements on the EDCI. For example, consumers watching a streaming video service in real time need a high-bandwidth connection with low jitter and latency, whereas a Google search instance requires much less bandwidth.

The majority of end users are connected to the cloud via services from CSPs. Note that these are networking services that are not necessarily ‘hard linked’ to specific cloud services, but instead are probably part of an Internet access service. There are exceptions for some enterprise cloud-based services built as private networks, where connections to end users may be included in a common network infrastructure. Unlike DCI connections, which are in the 10–100 gigabit per second range, many of these EDCI connections fall in the much lower megabit per second range. Common CSP services connecting end users include Carrier Ethernet, VPN, Internet access and mobile cellular data service.

Transport hardware for EDCI includes DWDM packet transport systems, Carrier Ethernet equipment, MPLS-TP based packet transport nodes, DSL systems, cable data modem systems, mobile wireless solutions and IP/MPLS routers. It is also common to have a combination of platforms and technologies, with an edge platform feeding a packet-optical transport system for aggregation and core transport and routers at the data layer for support of switched VPN services.

ROADM-based DWDM packet optical transport can meet the requirements for EDCI, DCI and the varying requirements from the different data centre operators, both today and as they evolve. DWDM transport systems are suited to handle the large bandwidths required in data centre applications, but it is the addition of flexible ROADM capabilities that provides the next level of value.

How ROADMs add value

ROADMs are DWDM optical components that enable wavelengths to be added, dropped and multiplexed locally or passed through, all under software control. In terms of network architecture, multi-degree ROADMs enable optical express where wavelengths can be passed through optically, without an optical-electrical-optical (OEO) regeneration, to dramatically reduce the power consumption and cost of optical transport.
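To make the optical express saving concrete, the following is a minimal sketch of how the interface count changes for a single wavelength on a linear path. The function name `transponder_count` and the regeneration model are assumptions for illustration only: the sketch assumes that, without optical express, every intermediate node terminates the wavelength and regenerates it with a back-to-back transponder pair, which will not hold for every network design.

```python
def transponder_count(intermediate_nodes: int, optical_express: bool) -> int:
    """Count the transponder interfaces used by one wavelength on a linear path.

    Illustrative assumption: without optical express, each intermediate node
    performs OEO regeneration with a back-to-back transponder pair. With
    optical express, intermediate ROADMs pass the wavelength through
    optically, so only the two end points need a transponder.
    """
    if intermediate_nodes < 0:
        raise ValueError("intermediate_nodes must be non-negative")
    end_points = 2  # one transponder at each end of the wavelength
    if optical_express:
        return end_points
    return end_points + 2 * intermediate_nodes


if __name__ == "__main__":
    for nodes in (0, 1, 3):
        without = transponder_count(nodes, optical_express=False)
        with_express = transponder_count(nodes, optical_express=True)
        print(f"{nodes} intermediate nodes: {without} vs {with_express} transponders")
```

Under this simplified model the gap widens with every intermediate node the wavelength crosses, which is why the benefit grows as more data centres are meshed together. Real savings depend on optical reach, traffic patterns and equipment pricing, none of which are modelled here.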

There are various ROADM architectures with different levels of optimisation and flexibility to support a wide range of applications in terms of capacity and resiliency requirements. Essentially there are three key types:

- Coloured ROADM – uses a broadcast-and-select architecture that enables optical express wavelengths from any degree to be passed to any other degree. Local transponder add/drop is more limited: each add/drop port is fixed to a specific wavelength and to a specific degree direction. This is the most cost-effective type of multi-degree ROADM, but the limits on flexibility may require manual intervention to change how transponders are connected.

- Colourless and directionless (CD) – based on a route-and-select architecture, this type adds the capability to assign any transponder add/drop wavelength to any add/drop port or degree of the ROADM, so that the assignment of transponders to wavelengths and degrees can be changed via software. CD ROADM architectures are more expensive than coloured ROADM architectures, but the flexibility justifies the cost in larger, more mesh-based network architectures. Another important capability of CD ROADMs is support for flexible channel spacing, known as ‘gridless’ or ‘flexigrid’.

- Colourless, directionless and contentionless (CDC) – also based on a route-and-select architecture, but resolves the blocking problem that occurs with CD ROADMs when wavelengths of the same frequency are added/dropped from different degrees. (In the case of CD ROADMs, this has to be managed and can lead to stranded, unusable wavelengths in some directions.) CDC ROADMs also support flexible channel spacing. A CDC ROADM network enables non-blocking mesh architectures.
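The contention difference between CD and CDC add/drop can be sketched with a toy model. This is a simplification under stated assumptions, not a description of any vendor's hardware: the class `AddDropBank`, the degree names and the channel numbers are all hypothetical, and the model assumes a CD node has a single shared add/drop bank in which a given channel can be used only once, whatever degree it is steered to.

```python
from typing import Set, Tuple


class AddDropBank:
    """Toy model of a ROADM add/drop structure (hypothetical, for illustration)."""

    def __init__(self, contentionless: bool) -> None:
        self.contentionless = contentionless
        # Each entry is a (degree, channel) pair currently in service.
        self.in_use: Set[Tuple[str, int]] = set()

    def add(self, degree: str, channel: int) -> bool:
        """Try to add a channel towards a degree; return True on success."""
        if (degree, channel) in self.in_use:
            # The same channel cannot be lit twice on the same fibre direction.
            return False
        if not self.contentionless and any(ch == channel for _, ch in self.in_use):
            # CD assumption: the shared bank already carries this channel,
            # so a second copy is blocked even though it targets another degree.
            return False
        self.in_use.add((degree, channel))
        return True


if __name__ == "__main__":
    cd = AddDropBank(contentionless=False)
    cdc = AddDropBank(contentionless=True)
    for name, bank in (("CD", cd), ("CDC", cdc)):
        first = bank.add("east", 32)
        second = bank.add("west", 32)
        print(name, "east:", first, "west:", second)
```

In this model the CD bank accepts channel 32 towards the east degree and then blocks the same channel towards the west, while the CDC bank accepts both. The blocked request is what leaves a wavelength stranded on the west degree unless it is planned around.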

ROADM architecture and technology will continue to evolve to support additional flexibility in the future. One form of this will likely be additional modularity, with ROADM building blocks such as wavelength selective switches and splitters offered as pluggable optical components.

With the support of optical express and wavelength switching, ROADMs can best manage high bandwidth interconnections at lower cost points by maintaining the data signals optically for as long as possible in the network. Elimination of expensive electrical regeneration can save as much as 30 per cent in equipment costs. This becomes more important as the number of data centres increases and the trend towards distribution of more data centres towards the edge of the network continues. In addition, route-and-select (CD and CDC) ROADMs with gridless capabilities support the evolution to super-channels to transport very large DCI bandwidths greater than 100G. While the costs of these ROADMs are higher than point-to-point DWDM systems or broadcast-and-select ROADMs, when transporting 100G or greater bandwidths the relative cost of gridless ROADMs is much less a factor in the overall costs of the transport solution compared to the costs of electrical regeneration of multiple 100G or greater optical links.

These same ROADM-based optical express and wavelength switching capabilities also lower power consumption by keeping signals optical for as long as possible, reducing the number of interfaces required not only on DWDM transport systems but also on interconnected routers. ROADMs can balance signal power levels more accurately than manual balancing and provide better overall optical performance in the network, resulting in lower power consumption.

In terms of resiliency, ROADMs enable resilient, high-bandwidth networks to be built that can use existing interfaces for protection. In mesh-type DCI or EDCI architectures, ROADMs with the addition of control plane capabilities enabled through SDN or GMPLS support rerouting of the optical wavelengths when a failure occurs. In many cases, this type of resiliency does not require costly dedicated redundant interfaces and also lowers power consumption by leveraging existing interfaces as much as possible.

ROADMs are also important for data centre transport applications in terms of enabling a flexible and programmable network. By eliminating manual intervention and dramatically simplifying network engineering, ROADMs can accelerate service activation times by as much as 80 per cent. When coupled with SDN control, the transport network can be adjusted as required for calendaring, data centre backups and maintenance, and ultimately to support applications like bandwidth on demand.
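As a rough illustration of restoration in a mesh, the sketch below recomputes a wavelength's route when a fibre link fails, reusing the same end-point interfaces. It is a minimal sketch only: the four-site topology, the site names and the function `shortest_path` are hypothetical, and a real SDN or GMPLS control plane would also have to consider wavelength availability, optical reach and signalling, which are ignored here.

```python
from collections import deque
from typing import AbstractSet, Dict, FrozenSet, List, Optional, Set

Topology = Dict[str, Set[str]]


def shortest_path(
    topology: Topology,
    src: str,
    dst: str,
    failed_links: AbstractSet[FrozenSet[str]] = frozenset(),
) -> Optional[List[str]]:
    """Breadth-first search for a fewest-hop path that avoids failed links."""
    queue = deque([[src]])
    visited = {src}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == dst:
            return path
        # Sort neighbours so the result is deterministic.
        for neighbour in sorted(topology.get(node, ())):
            if neighbour in visited:
                continue
            if frozenset((node, neighbour)) in failed_links:
                continue  # this fibre span is down
            visited.add(neighbour)
            queue.append(path + [neighbour])
    return None  # no surviving route


# Hypothetical mesh of four data centres.
MESH: Topology = {
    "A": {"B", "C"},
    "B": {"A", "C", "D"},
    "C": {"A", "B", "D"},
    "D": {"B", "C"},
}

if __name__ == "__main__":
    working = shortest_path(MESH, "A", "D")
    restored = shortest_path(MESH, "A", "D", {frozenset(("B", "D"))})
    print("working route:", working)
    print("restored route:", restored)
```

The point of the example is that the restored route terminates on the same two end points as the working route, so no dedicated standby interface is needed; the intermediate ROADMs are simply reconfigured to switch the wavelength along the new path.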

Summarising the value of ROADMs in terms of DCI and EDCI transport applications, ROADMs add value in the following ways:

- DCI – lower power consumption, better resiliency, and a network that is more manageable and easier to change as mesh size increases, ultimately tying the flexibility and programmability of the network to the flexibility and programmability of the data centres. Today, broadcast-and-select ROADMs are employed in small DCI networks; in the future, with the growth of interconnect at wavelength rates of 100G and beyond, CDC ROADMs will be deployed.

- EDCI – lower cost for the large access and aggregation networks that connect many end users to cloud data centres, directly or through the Internet. ROADM deployments for EDCI applications have primarily been broadcast-and-select ROADMs.

- Combined DCI and EDCI – there are cases where both DCI and EDCI transport applications are built with a common network infrastructure. ROADMs add value in these cases for all the reasons stated above. Many of the ROADM deployments for data centre transport today both interconnect data centres and connect end users from the same network infrastructure. The flexibility and cost savings of ROADMs really stand out in this combined application.

In summary, ROADM-based transport networks can enable very high bandwidth support, increase network resiliency, lower power consumption, and support multi-service flexible bandwidth. While the case can already be made that ROADMs have a place in both DCI and EDCI applications, the final point to make is that ultimately cloud services are about changing the rigid service delivery model of the past and making the network as flexible and programmable as the applications. ROADMs help enable this vision.

Bill Kautz is director of strategic solutions marketing at Coriant