Cost and compatibility can make a compelling case for pushing 100Gb/s bandwidth over a single optical channel, both for individual links and in support of 400Gb/s Ethernet, finds Andy Extance

Cloud data centres bring valuable services that many of us are progressively accepting into our daily lives – and in doing so, push optical communication’s limits. ‘The premier league cloud data centres have this tremendous appetite for more bandwidth,’ comments Andy Bechtolsheim, chief development officer at Arista Networks in Santa Clara, US. They expect their bandwidth needs to double every two years or so, he says.

‘400G is the best way to deliver that kind of bandwidth cost-effectively,’ he adds. Annual global cloud traffic is set to exceed 14 zettabytes by 2020, Mark Nowell, distinguished engineer at Cisco in Ottawa, Canada, wrote in a company blog in January. He calls that ‘astounding’ considering that the world only reached one zettabyte a couple of years ago. Consequently, 400Gb/s Ethernet systems featuring eight 50Gb/s optical channels are currently being built and tested. These systems follow specifications such as the Octal Small Form Factor Pluggable (OSFP) or Quad Small Form Factor – Double Density (QSFP-DD).

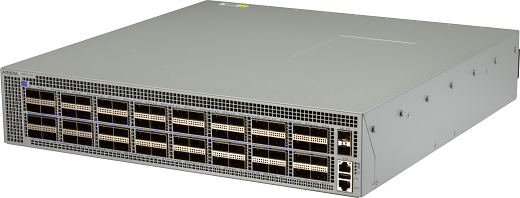

Arista Networks plans to evolve its data centre switches, such as the 7260CX3-64 shown here, from their current 100GbE formats to 100G serial

‘As an industry we’ve not built eight-lane technologies before,’ Nowell tells Fibre Systems. As chair of both the IEEE group devising the 802.3cd standard and the 100Gb/s lambda multi-source agreement (MSA), he has led work enabling 100Gb/s single channels. Nowell says he expects that eight channels of existing technology will not cost less than four 100Gb/s single channels, even though producing the latter is technically challenging. That 100G serial, or 100G lambda, capability could also help reduce costs for 100Gb/s Ethernet connections currently composed of 4 x 25Gb/s lanes. ‘The four-lane solutions are getting high volume and a lot of economies of scale and maturation, so those costs are coming down,’ he says. ‘But the expectation is that 100G lambda technology is going to prove to be lower cost.’

Higher speed

Currently most four-lane 100Gb/s systems have 3.2Tb/s or 3.6Tb/s switch chips at their electronic core, Nowell explains. Companies can attain 12.8Tb/s or higher by adopting the latest 7nm silicon lithography fabrication node, ‘but we still have to get the signals on and off the chip’, he says. It’s not possible to double the number of electrical signals as the number of balls through which the signals pass is already close to the maximum possible in standardised module sizes. Therefore, to support the growth in total application-specific integrated circuit (ASIC) bandwidth, the electrical input/output signals must be higher speed. Specifically, the electrical serialisation/de-serialisation (SERDES) signal speed must accelerate. The whole industry is moving from 25Gb/s to 50Gb/s SERDES on the switching systems’ circuit boards for the next generation, Nowell explains, ‘and we’re all planning on moving to 100Gb/s SERDES in the future.’
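The lane arithmetic behind that argument can be sketched in a few lines of Python. This is a rough illustration only: the function name is hypothetical, the rates are the nominal figures quoted above, and encoding or error-correction overhead is ignored.

```python
def serdes_lanes(switch_tbps, lane_gbps):
    """Electrical lanes needed to carry the full switch bandwidth.

    Uses nominal rates only; real designs also carry encoding overhead.
    """
    return round(switch_tbps * 1000 / lane_gbps)


# A 3.2Tb/s chip with 25Gb/s SERDES, for comparison.
print("3.2Tb/s at 25Gb/s per lane:", serdes_lanes(3.2, 25), "lanes")

# A 12.8Tb/s chip at each SERDES generation mentioned in the text.
for lane_gbps in (25, 50, 100):
    print(f"12.8Tb/s at {lane_gbps}Gb/s per lane:",
          serdes_lanes(12.8, lane_gbps), "lanes")
```

On these nominal figures, a 12.8Tb/s chip with 100Gb/s SERDES needs the same number of lanes as a 3.2Tb/s chip with 25Gb/s SERDES. Staying at 25Gb/s would require four times as many, which is the package constraint Nowell describes.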

The good news is that board SERDES and optical SERDES don’t have to match. ‘For the first generation of 400Gb/s Ethernet based on 100Gb/s lambda, you would have this eight-lane electrical interface, which is called 400GAUI-8,’ Nowell says. ‘This is still the ASIC based on 50Gb/s SERDES. Then a “gearbox” chip translates from eight lanes down to four.

‘The Ethernet standards are written to allow that, because we need to allow these two interfaces to change at different times. We typically see SERDES at the high-speed optics end first, then it migrates back into the big switches.’
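As a toy model of the gearbox Nowell describes, the Python sketch below groups eight 50Gb/s electrical lanes into four 100Gb/s optical lanes. It tracks only lane counts and nominal rates. The function and its `ratio` parameter are illustrative assumptions, and it says nothing about how a real gearbox chip multiplexes or re-encodes the data.

```python
def gearbox(host_lanes_gbps, ratio=2):
    """Combine every `ratio` host-side lanes into one line-side lane.

    Toy model: only nominal lane rates are tracked, not the bit streams.
    """
    if len(host_lanes_gbps) % ratio:
        raise ValueError("host lane count must be a multiple of the ratio")
    return [
        sum(host_lanes_gbps[i:i + ratio])
        for i in range(0, len(host_lanes_gbps), ratio)
    ]


host_side = [50] * 8            # eight 50Gb/s lanes, as in 400GAUI-8
line_side = gearbox(host_side)  # four lanes towards the optics

print(len(host_side), "x", host_side[0], "Gb/s in")
print(len(line_side), "x", line_side[0], "Gb/s out")
```

Total throughput is unchanged at a nominal 400Gb/s. Only the lane count and per-lane rate differ, which is why the two interfaces can move to faster SERDES at different times.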

Steffen Koehler, senior director, marketing at Finisar in Sunnyvale, California, emphasises this erodes the 100G serial cost benefit gained from reducing the number of lasers. ‘Savings in lasers is somewhat offset by a greater expense per laser, and also by the need in the first generation of 100G serial optics for gearbox ICs to multiplex multiple electrical data streams to a higher serial data rate. The choice of what serial data rate is lowest cost depends on factors like manufacturing volume, distance being served and technology being used.’

Steffen Koehler, senior director, marketing, at Finisar

Cost is king

Consequently, Finisar introduced 400G-LR8 and 400G-FR8 transceivers, with 10km and 2km reaches respectively and using the eight-lane QSFP-DD form factor, at the OFC conference in San Diego, US, in March. Yet at the same time it introduced a serial 100G-FR transceiver in the QSFP28 form factor with a 2km reach. Finisar also plans to introduce a 500m-reach 100G-DR version of this module, which Koehler underlines will interlink the 400Gb/s Ethernet and 100G serial offerings.

‘Four separate 100G-DR serial transceivers can be used to communicate with a single 400G QSFP-DD DR4 transceiver when used in a so-called “breakout” configuration, so these are complementary products,’ he says. ‘Finisar’s broad portfolio covers shortwave multimode and longwave single mode applications. It intends to continue its long tradition of pushing the forefront of optical transceiver speeds to serve the evolving needs of our customers. To do this, a mix of different technologies is required for different applications, all with the goal of reducing our customers’ overall data connection costs.’

Koehler also stresses that Finisar’s 50Gb/s and 100Gb/s lanes use PAM4 encoding, instead of the traditional non-return-to-zero (NRZ) format, which he sees as crucial in meeting bandwidth demands. The PAM4 modulation scheme doubles the bit rate without increasing the baud rate, by using four signal levels instead of two, he says.
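A minimal Python sketch shows why four levels double the bit rate at a given baud rate: each PAM4 symbol carries two bits where an NRZ symbol carries one. The Gray-coded bit-pair mapping used here is a common choice but is an assumption for illustration, not a detail taken from Finisar, and the names are hypothetical.

```python
# Assumed Gray-coded mapping from bit pairs to the four PAM4 levels.
GRAY_PAM4 = {(0, 0): 0, (0, 1): 1, (1, 1): 2, (1, 0): 3}


def nrz_symbols(bits):
    """NRZ: one bit per symbol, two signal levels."""
    return list(bits)


def pam4_symbols(bits):
    """PAM4: two bits per symbol, four signal levels."""
    if len(bits) % 2:
        raise ValueError("PAM4 needs an even number of bits")
    return [GRAY_PAM4[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


bits = [1, 0, 0, 1, 1, 1, 0, 0]
print(len(nrz_symbols(bits)), "NRZ symbols")    # 8 symbols for 8 bits
print(len(pam4_symbols(bits)), "PAM4 symbols")  # 4 symbols for 8 bits
print(pam4_symbols(bits))                       # [3, 1, 2, 0]
```

The same eight bits need half as many symbols in PAM4, so a link sending symbols at a given rate carries twice the bits.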

‘The trade-off is that each PAM4 level is harder to detect than an NRZ level,’ Koehler explains. ‘This higher-order modulation scheme is enabled by low-noise lasers and accompanying IC technology. Finisar has been a leader in both the technologies and the standardisation behind PAM4-based communications links. 50Gb/s PAM4 and 100Gb/s PAM4 enable data centres to grow to increased throughput rates with a much lower cost than brute-force increases in baud rate. With the combination of higher baud rates and PAM4 modulation, the bandwidth is increased at the lowest extra cost. This allows much richer data centre fabrics, and will enable advanced processing functions such as machine learning.’
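The detection trade-off Koehler mentions can be estimated with an idealised calculation. The Python sketch below assumes equally spaced levels and the same peak-to-peak signal swing for both formats, so each of the three PAM4 'eyes' is one third the height of the single NRZ eye. It ignores real-world noise, equalisation and forward error correction, and the function names are illustrative.

```python
import math


def eye_fraction(levels):
    """Height of each eye relative to a two-level signal of equal swing."""
    return 1 / (levels - 1)


def electrical_penalty_db(levels):
    """Idealised amplitude penalty versus NRZ, in electrical-domain dB."""
    return 20 * math.log10(levels - 1)


print(f"PAM4 eye height: {eye_fraction(4):.3f} of the NRZ eye")
print(f"Idealised penalty: {electrical_penalty_db(4):.1f} dB")
```

Under these assumptions the penalty is roughly 9.5dB in electrical signal-to-noise terms, which is the gap that low-noise lasers and signal-processing ICs have to recover.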

Arista’s Bechtolsheim adds: ‘When choosing next generation 400G optics, the three things customers are most worried about are cost, longevity, and compatibility with the installed base of fibre cabling. 400G optics based on 100G lambda address all of these concerns. First, optics with four lambda of 100G will be lower cost than optics with eight lambda of 50G. Second, 100G lambda optics are forward compatible with merchant switch silicon that has 100G SERDES serial interfaces, and given that 100G serial is the fastest speed that conventional printed circuit boards support, this means that 100G lambda optics will have a very long life. Third, 100G lambda optics work with existing fibre plants. Customers that have deployed 8-fibre cabling for 4 x 25Gb/s 100G-PSM4 today can transition to 400G-DR4 without making any changes to their fibre plant.’

Andy Bechtolsheim, chief development officer at Arista Networks

Ecosystem upgrade

The Arista executive acknowledges the challenges in developing components for an all-100G serial ecosystem, on the electrical and optical side. ‘It is clear that there is demand for 100G lambda optics starting in 2019 and for merchant switch chips with 100G SERDES as soon as they can be built,’ Bechtolsheim says.

‘There is still some work to be done specifying the 100G SERDES electrical channel between the switch and the optics, in particular the electrical performance of the optics module connector. We have demonstrated 100G SERDES across the OSFP module connector with good margin, which enables up to 28.8Tb/s density per rack unit front panel with 800G OSFP optics modules.’ Nowell notes that there’s a range of different technologies from various companies that the 100G lambda MSA accommodates. ‘The last challenge is that the test methodologies are new.’ However, standard-setting is helping define these required methodologies, Nowell adds. ‘Smart people are refining them. That takes time, but it won’t slow down availability.’

Cisco anticipates that 100G serial, and 400Gb/s Ethernet in general, will be ‘important technology’, Nowell adds. ‘On the platform side, we’re going to have solutions across the routing and switching space, supporting QSFP-DD across all of the modules, including 400G-FR4 and 400GBASE-DR4,’ he says. ‘We expect to have the QSFP28s with 100Gb/s shipping on our platforms as well.’

To help advance 100G serial readiness, the MSA that Nowell leads conducted a private ‘plugfest’ this summer, at which firms tested their equipment against each other’s. The MSA plans to follow that up with a public interoperability demonstration at an industry show this autumn.

‘Companies feel that their solutions are ready to be tested,’ Nowell says. Israel-based MultiPhy develops 100Gb/s digital-signal-processing (DSP) based integrated circuits that support PAM4 modulation schemes. ‘We’ve very much been focused on developing the 100G serial technology and implementing it in the silicon and DSP,’ CEO Avi Shabtai says. ‘Our work was showing that this is possible, and that you can create an ecosystem, and then also making sure that it gets to mainstream standardisation.’ He’s therefore pleased to see companies ‘marching into 100Gb/s and 400Gb/s Ethernet’, the IEEE standards and the 100Gb/s lambda MSA.

MultiPhy’s MPF3101 DSP chip has helped to prove 100G serial is possible, according to CEO Avi Shabtai

100G serial: killer technology

That momentum indicates cost structure convergence, Shabtai asserts, which would make building modules with a single optical path much cheaper. Costs may fall below $1 per Gb/s of optical link capacity, he believes. ‘Once you move to serial interconnect and you have native 100Gb/s, it’s much easier to increase the density of the ports.’ Modules should also consume less power, he adds.

MultiPhy’s approach to supporting the technology in the short term is moving from 25Gb/s to 50Gb/s electrical SERDES. ‘We face the challenges of driving 100Gb/s on a single optical path by applying very unique patented signal processing technology,’ Shabtai says. This helps module makers relax optical component requirements, particularly important for 100G serial.

‘One of the challenges is how also to improve the optics,’ the MultiPhy CEO says. ‘Everyone understands the speed at which optics evolves is lower than that of silicon or CMOS technology. That’s because there is less worldwide investment in advancing optics. Transmitting 100Gb/s, there are a lot of issues related to reflections and signal integrity and non-optimised linearity of components. That creates, in many cases, a very challenging channel. We need to apply a lot of signal processing methods and clock recoveries to detect the signals.’

At OFC, Accelink Technologies of Wuhan, China, and InnoLight Technology of Suzhou, China, both demonstrated QSFP28 form factor 100Gb/s single-lambda modules integrating MultiPhy’s MPF3101 DSP chip. ‘We’re working with several module companies and system integrators on integrating 100Gb/s single wavelengths,’ Shabtai says. ‘That’s part of the whole wave that’s coming to the industry in the next 12 months. It’s going to be a paradigm shift in the industry.’

Bechtolsheim makes the consequences explicit. ‘According to market research, the market for 100G is still growing rapidly and is projected to exceed 10 million switch ports this year and 20 million in 2020,’ he says. ‘400G is projected to ramp from a few hundred thousand ports in 2019 to more than five million ports in 2021, at which point the bandwidth of 400G ports shipped would exceed the bandwidth of 100G ports shipped. It puts a premium on delivering the most cost-effective 100G and 400G optics and switching technologies to the market as soon as possible.’
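The crossover Bechtolsheim describes can be checked with simple arithmetic, sketched here in Python. The helper name is hypothetical and the port counts are the round figures from the quote. The quote gives no projection for 100G ports in 2021, so the sketch only computes the break-even point.

```python
def equivalent_ports(ports, port_gbps, reference_gbps=100):
    """Number of reference-speed ports carrying the same total bandwidth."""
    return ports * port_gbps // reference_gbps


# Five million 400G ports, the figure quoted for 2021.
break_even = equivalent_ports(5_000_000, 400)
print(f"{break_even:,} x 100G ports carry the same bandwidth")
```

Five million 400G ports match the bandwidth of 20 million 100G ports, the volume quoted for 2020. The projection therefore holds if 100G shipments in 2021 stay at or below about that level.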

Continuing cloud data centre build-outs support such forecasts, the Arista executive adds. ‘The growth in the cloud is simply astonishing,’ says Bechtolsheim. ‘As long as that continues, which we think will be for many years, the demand for faster switches and optics in the cloud will continue to grow proportionally.’

Andy Extance is a freelance science writer based in Exeter, UK