There has never been a better time to be in optical communications. Major communication trends such as UHD video, cloud services, big data and the move of mobile internet from LTE to 5G affect everyone’s lives. They are all reflected in an evolving optical networking architecture and in paradigm changes in how and where optical gear is used.

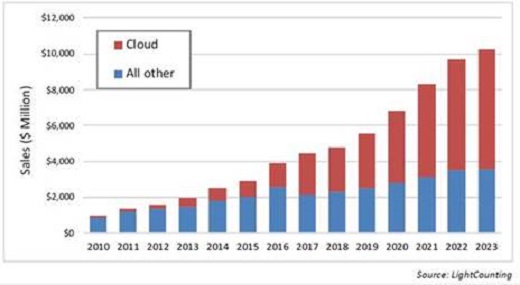

The rise of the cloud has been one of the major success stories in the last few years and, as shown in Figure 1, cloud expenditure on optical gear is projected to dominate the market of Ethernet and DWDM interconnects. This will inherently change product features, development cycles, or manufacturing processes for high-speed optical interconnects.

Figure 1

The industry will have to adapt to a larger degree of scale for its products, which is evident in the move of coherent optics into the high volume datacom market. Here, the key enablers are opto-electronic subcomponents and optical digital signal processing (oDSP) algorithms.

On the one hand, Silicon Photonics has seen an enormous investment over the years based on the premise that one day one could print optical functions the same way that transistors are being designed in CMOS, repeatedly and with a very high yield, thus blurring the boundaries of where electronics end and optics begin. On the other hand, the oDSP algorithms will remain at the heart of optical innovation.

Maximising capacity

In the same way that optics are absorbing former domains of copper transmission, oDSPs are carrying more and more functionality to maximise the capacity per given physical interface and expand the application scope of optical interconnects to new domains.

Since the first coherent oDSPs went mainstream with 100Gb/s long haul interconnects, the industry race was on for higher performance using advanced forward error correction (FEC) or equalisation techniques, and higher throughput with advanced modulation schemes. After 10 years of progress, innovation seems harder to come by. The industry started hearing claims about reaching theoretical limits, referring to the boundary derived by the founder of communication theory, Claude Shannon.

Indeed, the net coding gain achieved by FEC is saturating and, combined with the latest industry achievement of probabilistic shaping, it brings the architecture very close to the textbook performance limit of a linear, additive-noise communication channel. Luckily, the passion of every engineer is defined by the desire and ability to think outside the box and break any stated limits. Such a stated limit arose when researchers were faced with the problem that an interconnect, which reaches 2,000km in the lab, often ends up with a reach of 500km in a deployed legacy fibre.
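To make the textbook limit concrete, the short Python sketch below evaluates Shannon's capacity formula for a linear, additive-noise channel, and the SNR penalty of a system that carries fewer bits than that limit allows. The function names and the example SNR values are illustrative assumptions, not figures from any particular oDSP.

```python
import math


def shannon_capacity_bits_per_symbol(snr_db: float) -> float:
    """Capacity of a linear additive-noise channel, in bits per complex symbol
    (one polarisation), for a given signal-to-noise ratio in dB."""
    snr_linear = 10 ** (snr_db / 10)
    return math.log2(1 + snr_linear)


def snr_gap_to_shannon_db(snr_db: float, achieved_bits_per_symbol: float) -> float:
    """Extra SNR (dB) a system needs compared with the Shannon limit
    to carry the same number of bits per symbol."""
    required_snr_linear = 2 ** achieved_bits_per_symbol - 1
    return snr_db - 10 * math.log10(required_snr_linear)


if __name__ == "__main__":
    # Illustrative SNR values only.
    for snr_db in (10.0, 15.0, 20.0):
        capacity = shannon_capacity_bits_per_symbol(snr_db)
        print(f"SNR {snr_db:4.1f} dB -> limit {capacity:.2f} bit/symbol")
```

A system carrying 4 bits per symbol at 20dB SNR would, under this idealised model, sit a little over 8dB away from the limit. FEC and probabilistic shaping work to close exactly this kind of gap.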

Channel matched shaping

There is a multitude of new techniques designed to push performance further in real-life scenarios of deployed fibres. The concept of channel matched shaping (CMS) focuses on the impairments of live networks, such as ageing or stressed fibres, deteriorating components, non-linear effects and weather phenomena like lightning, which can all lead to time-varying degradation of the channel.

CMS senses these impairments and optimally adjusts the signal processing, in order to compensate for the impact and, if needed, adjust the transmission rate. Optical signals used to be like ice cubes – inflexible and hardly adaptive. CMS makes the fibre link feel like water flowing through a pipe, adapting to the shape of the channel. As Bruce Lee once said: ‘You must be shapeless, formless, like water. When you pour water in a cup, it becomes the cup. When you pour water in a bottle, it becomes the bottle. Water can flow or it can crash. Become like water my friend.’

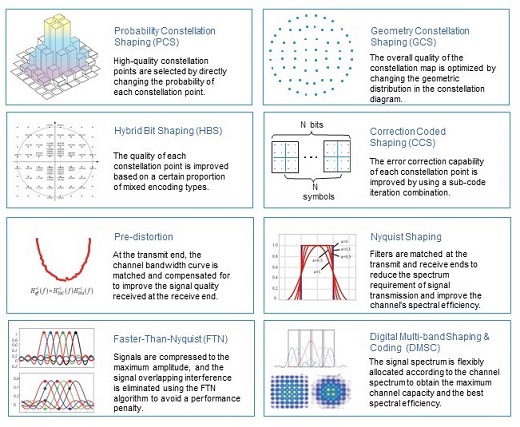

In addition to conventional constellation shaping techniques, CMS uses hybrid bit shaping and coded constellation shaping, and combines these with channel filtering techniques such as Faster-than-Nyquist or multi-carrier transmission with individual shaping and coding per subcarrier, as shown in Figure 2.

Figure 2
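The benefit of individual shaping and coding per subcarrier can be illustrated with a classic gap-approximation bit-loading rule. This is a generic textbook technique used here as a stand-in, not the CMS algorithm itself. The SNR values, the 3dB implementation gap and the 6-bit cap are assumptions chosen for illustration.

```python
import math


def bits_per_subcarrier(snr_db_per_subcarrier, gap_db=3.0, max_bits=6.0):
    """Gap-approximation loading: each subcarrier carries
    log2(1 + SNR / gap) bits per symbol, capped at max_bits."""
    gap_linear = 10 ** (gap_db / 10)
    loading = []
    for snr_db in snr_db_per_subcarrier:
        snr_linear = 10 ** (snr_db / 10)
        bits = math.log2(1 + snr_linear / gap_linear)
        loading.append(min(bits, max_bits))
    return loading


def uniform_loading(snr_db_per_subcarrier, gap_db=3.0, max_bits=6.0):
    """One common format for all subcarriers, limited by the worst one."""
    worst = min(bits_per_subcarrier(snr_db_per_subcarrier, gap_db, max_bits))
    return [worst] * len(snr_db_per_subcarrier)


if __name__ == "__main__":
    # Hypothetical link: the third subcarrier suffers a narrowband impairment.
    snrs_db = [18.0, 17.0, 12.0, 16.5]
    adaptive = bits_per_subcarrier(snrs_db)
    uniform = uniform_loading(snrs_db)
    print(f"adaptive total: {sum(adaptive):.2f} bit/symbol")
    print(f"uniform total:  {sum(uniform):.2f} bit/symbol")
```

With a single format, the degraded subcarrier dictates the rate of the whole channel. With per-subcarrier loading, only the affected subcarrier gives up capacity.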

A performance-improving combination

By using a combination of different techniques to address specific problems in real networks, CMS is designed to resolve the bottleneck in a transmission channel and improve performance. In this way, when going from lab to live, the performance of the transmission can be greatly improved on top of the textbook promises of FEC or probabilistic constellation shaping.

Another important trend is the disaggregation of the network functions to a varying degree, such as the separation of line terminals and optical line systems, or physical and control layers. This trend is still under heated discussion and considered to be well suited for datacom networks, especially for data centre interconnect short-reach applications.

Here, operators are willing to take on the burden of additional management and maintenance from the system vendors in order to arrive at more generic network elements using a unified control or management layer based on SDN. However, this design of optical networks may not be able to reap the full benefits of an optical network evolution towards integrated, fully autonomous and adaptive systems with jointly designed and co-optimised physical and control layers.

Autonomous and adaptive networks will increasingly rely on AI in order to learn about the network state and predict disruptive events like an optical reroute or a channel capacity downgrade. These predictive capabilities will make it possible to adapt the network in a non-disruptive manner, e.g. using maintenance windows for the reroute of optical connections, the upgrade of line cards, or the clearing of potential malfunctions before the service goes down.
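As a deliberately simple stand-in for such predictive capabilities, the sketch below fits a straight line to a series of OSNR readings and estimates how long a link has before it crosses a service threshold. A real AI-driven system would use far richer models and telemetry. The function names, sample values and threshold are hypothetical.

```python
def fit_line(times_h, values):
    """Ordinary least-squares straight-line fit; returns (slope, intercept).
    Needs at least two samples taken at different times."""
    n = len(times_h)
    mean_t = sum(times_h) / n
    mean_v = sum(values) / n
    var_t = sum((t - mean_t) ** 2 for t in times_h)
    cov = sum((t - mean_t) * (v - mean_v) for t, v in zip(times_h, values))
    slope = cov / var_t
    return slope, mean_v - slope * mean_t


def hours_until_threshold(times_h, osnr_db, threshold_db):
    """Extrapolate the OSNR trend and return the hours remaining, counted from
    the last sample, until it reaches threshold_db. Returns None when the
    trend is flat or improving."""
    slope, intercept = fit_line(times_h, osnr_db)
    if slope >= 0:
        return None
    t_cross = (threshold_db - intercept) / slope
    return max(0.0, t_cross - times_h[-1])


if __name__ == "__main__":
    # Hypothetical telemetry: OSNR in dB sampled once per hour.
    times_h = [0, 1, 2, 3]
    osnr_db = [20.0, 19.0, 18.0, 17.0]
    remaining = hours_until_threshold(times_h, osnr_db, threshold_db=15.0)
    print(f"estimated hours until threshold: {remaining}")
```

An estimate like this is what would allow a reroute to be scheduled in a maintenance window rather than triggered by a failure.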

Advanced insight

This AI-powered OAM relies heavily on advanced telemetry in optical networks, which has to provide advanced insight into all relevant optical network parameters, like span loss, OSNR or fibre nonlinearities, while also being implemented in a cost-efficient manner. Here, optical systems integrated with AI neurons are of paramount importance, delivering a huge input from the optical layer to power the AI-enabled network control, as in Figure 3.

Figure 3

A tight integration of line terminals with the line system and management layer would help operators gain full visibility and unlock link performance, which would be lost in a scenario where optics are treated as generic IT network elements with standardised monitoring interfaces. Combined with innovation on optical components, these network innovations will power optical networks to line rates of 800Gb/s for metro networks and 400Gb/s for long haul on a single lambda. As we start to reach 800Gb/s on a single wavelength, systems will get more complicated and a lot more trade-offs must be made. On the one hand, you may have more noise if you use a very high-order modulation; on the other, if the baud rate increases above 95Gbaud, you may no longer fit the signal in a single 100GHz channel, unless advanced compression techniques are used.
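The trade-off between baud rate and modulation order comes down to simple arithmetic, sketched below. The sketch assumes a Nyquist-shaped dual-polarisation signal whose width is roughly the baud rate times one plus the roll-off factor, and it ignores guard bands and filter cascades. The roll-off values are assumptions for illustration.

```python
def occupied_bandwidth_ghz(baud_gbaud: float, roll_off: float) -> float:
    """Approximate spectral width of a raised-cosine shaped signal."""
    return baud_gbaud * (1 + roll_off)


def fits_channel(baud_gbaud: float, roll_off: float, channel_ghz: float) -> bool:
    """True if the signal fits in the channel slot (guard bands ignored)."""
    return occupied_bandwidth_ghz(baud_gbaud, roll_off) <= channel_ghz


def required_info_bits_per_symbol(net_rate_gbps: float, baud_gbaud: float,
                                  polarisations: int = 2) -> float:
    """Net information bits each symbol must carry per polarisation,
    before any FEC overhead is added."""
    return net_rate_gbps / (baud_gbaud * polarisations)


if __name__ == "__main__":
    for roll_off in (0.05, 0.10):  # assumed pulse-shaping roll-off factors
        width = occupied_bandwidth_ghz(95, roll_off)
        verdict = "fits" if fits_channel(95, roll_off, 100) else "does not fit"
        print(f"95 Gbaud, roll-off {roll_off:.2f}: {width:.2f} GHz, {verdict} in 100 GHz")
    bits = required_info_bits_per_symbol(800, 95)
    print(f"800 Gb/s at 95 Gbaud needs {bits:.2f} info bit/symbol/polarisation")
```

Under these assumptions, a 95Gbaud signal with 5 per cent roll-off occupies about 99.75GHz and only just fits a 100GHz slot, while 10 per cent roll-off already overflows it. Carrying 800Gb/s at that baud rate needs about 4.2 information bits per symbol per polarisation, more than 16QAM can offer even before FEC overhead, which is why higher-order and noise-sensitive formats come into play.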

Driven by the continuous doubling of Ethernet switch ASIC capacities every two years, line rates of 1.6Tb/s should not be far away. At this capacity node, it is likely several paradigm changes will be required for coherent interfaces, such as a more pragmatic parallelisation using cheap laser sources, monolithic photonic chips, the abandonment of pluggability concepts and a move towards the co-packaging of optics, DSPs and switching silicon.

Current demarcation lines between network elements could disappear. After all, at the core of every disaggregation trend is a successful integration of optical and electronic building blocks and innovation on the bleeding edge of technology.

Dr Maxim Kuschnerov is senior R&D manager and Dr Yin Wang is senior marketing manager, fixed network product line at Huawei